There's an algorithm which solves SAT instances in polynomial time, if and only if P=NP. If P=NP, then it runs in polynomial time. If P is not equal to NP, it runs in the best possible non-polynomial time for such a solver.

Levin's original paper

Leonid Levin, working independently with Stephen Cook, discovered the existence of so-called NP-complete problems. These are problems to which any other problem in the complexity class NP can be reduced. NP, of course, stands for "Nondeterministic Polynomial time" (on a Turing machine.)

Levin was working in the Soviet Union at the time, and his 1972-1793 paper is just two pages long. Like many Russian-language mathematical papers, it is terse and does not include the proofs. You can read a version of it here: https://rjlipton.wordpress.com/2011/03/14/levins-great-discoveries/

Levin's theorem

Theorem: For any search problem A(x,y) , there exists an algorithm that solves it in time that is optimal up to multiplication by a constant and the addition of a number polynomial in the length of the input.

On the one hand, this seems like something too obvious to mention. Of course there's a algorithm that achieves it in the optimal amount of time, isn't there?

Well, maybe there isn't. Maybe for a given problem there's a family of solutions, each faster than the next? Maybe an efficiently-computable search problem of the type he's mentioning does not have a computable inverse?

But Levin actually had something more powerful in mind, a procedure called Universal search.

Universal search

Suppose we have a function f that is "easy" to compute, and that we're interested in finding inverses. That is, given the x in f(y) = x, we're interested in finding some y that works. (Levin writes this as a property A(x,y) that can be "tested by an algorithm whose operating time is comparable with the length of x.")

If y exists, for a particular x, then there is some Turing machine that finds it. It might be a Turing machine that outputs just y. It might be a Turing machine that computes some other function which just happens to work as an inverse sometimes. Or it might be an optimal Turing machine for inverting f.

For example, f might be a boolean formula, and x = true. Then we are searching for a variable assignment in the formula that satisfies the formula, i.e., positive solutions to the SAT problem. (In this case, we probably want to include f itself in the input!)

So, why not just try every possible Turing machine, give it input x, and see what happens? (It's an unimaginably-large-number-to-one chance, but it just might work!)

The first problem with trying every Turing machine is that not every Turing machine stops working, and we can't tell ahead of time whether or not this is going to be one of those times a Turing machine runs forever. The second problem is that there are a lot of Turing machines, and trying all the ones that don't work before finding one that does work seems like it could be really slow--- how do we stay optimal "within multiplication by a constant"?

Parallel Turing Machines

We can run all possible Turing machines in parallel in a way that solved both problems. The assumption that makes this work is that Turing machines can be described in a self-delimiting format, or equivalently, that no Turing machine description is a prefix of another Turing machine description. (This is similar to what goes into the definition of Chaitin's constant.)

A simple example of such an encoding is to describe every Turing machine in unary, as a long sequence of 1's followed by a 0. Then there is (at most) one Turing machine of length 1, one Turing machine of length 2, and so on.

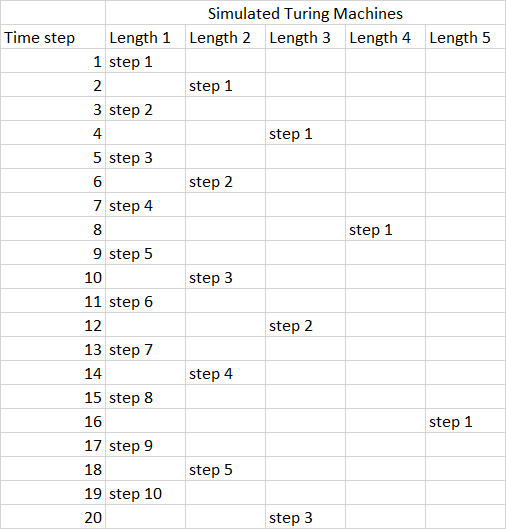

Now, we know that 2^-1 + 2^-2 + 2^-3 + 2^-4 + ... = 2^0; in binary, that's just 0.11111111... = 1, the same infinite series reasoning that shows 0.9999999.... = 1. So, if we spend half of our time simulating one Turing machine, and a quarter of our time simulating another Turing machine, and an eighth of our time simulating another Turing machine, etc., we will always be making progress on all Turing machines. (The same mathematics works out even if you adopt a more sophisticated encoding, as long as it is prefix-free.)

That's what Levin's algorithm does; it spends 2^(-l) of its time simulating Turing machines of length l. Every time one of the Turing machines halts, we look at its output tape; that's a candidate for our desired y. So calculate f(y) and see if it really is x--- if yes, we have an inverse. If not, discard that Turing machine and keep going on all the infinitely many others.

Time complexity of universal search

How long does it take universal search to find an inverse, asymptotically speaking? Suppose there's a program p, of length l(p), that is asymptotically the most efficient algorithm for computing the inverse of f, so it turns in time t(p,x) on input x

Then Universal Search will run in time 2^(l(p)) * ( t(p,x) + t(f,x) ). Every 2^(l(p)) simulation steps we will take one step in p. p will finish computing an inverse in time t(p,x) and halt. Then we will verify the solution using however long it takes to compute f(x). But the (gigantic) factor 2^(l(p)) is a constant, and we assumed that f was "easy" to compute, so this time is just O( t(p,x) ).

It might be that occasionally some unrelated program comes up with the right answer earlier, but this can happen in only finitely many cases. Or, perhaps there is a short program to invert f and a longer but more-efficient program to invert f. But in the long run (and the long run is very, very long) the most efficient algorithm will overcome its size disadvantage and be the first Turing machine to finish with a complete answer.

That is, if a polynomial-time algorithm exists to invert f, Universal Search also runs in polynomial time. In fact, no matter what time is required to invert f, Universal Search will run in constant multiple of that time. It successfully finds and uses whatever Turing machine, or family of Turing machines, are most efficient.

Decision problems vs search problems

There's a small problem, though. What if the inverse doesn't have a solution? Then Universal Search runs forever.

This means it's not an exact match for decision problems like SAT, or the Travelling Salesman Problem, or Graph Isomorphism. It can "efficiently" (if we ignore the huge constant factor) solve all of these problems in the affirmative, but it can't efficiently prove that there is no solution. It's also not good for optimization; we can't tell whether a Turing machine has provided the best possible answer, or just some answer.

But the technique of Universal Search can be expanded to also search for either a solution, or a proof that no solution exists, as long as both can be efficiently verified.

References

Matteo Gagliolo, (2007) Universal search, Scholarpedia, 2(11):2575

Marcus Hutter, The Fastest and Shortest Algorithm for All Well-Defined Problems, International Journal of Foundations of Computer Science, Vol.13, No.3, June 2002, 431-443

L. A. Levin. Universal sequential search problems. Problems of Information Transmission, 9:265–266, 1973.

Congratulations @markgritter! You have completed the following achievement on Steemit and have been rewarded with new badge(s) :

Click on the badge to view your Board of Honor.

If you no longer want to receive notifications, reply to this comment with the word

STOPDo not miss the last post from @steemitboard:

SteemitBoard and the Veterans on Steemit - The First Community Badge.

This post has been voted on by the steemstem curation team and voting trail.

There is more to SteemSTEM than just writing posts, check here for some more tips on being a community member. You can also join our discord here to get to know the rest of the community!

Hi @markgritter!

Your post was upvoted by utopian.io in cooperation with steemstem - supporting knowledge, innovation and technological advancement on the Steem Blockchain.

Contribute to Open Source with utopian.io

Learn how to contribute on our website and join the new open source economy.

Want to chat? Join the Utopian Community on Discord https://discord.gg/h52nFrV

interesting theory and algorithm. Do you think this could be applied in a modified way to steemit data and if so, how?

So, the large constants involve make the idea impractical, however theoretically interesting it might be. But it is possible to do exhaustive search of the space of programs in more restrictive domains; there's been some research, and there's a tool called "superopt" for finding optimal instruction sequences.

With Steemit there's no smart contracts, or even proof-of-work, that makes program optimization interesting on-blockchain. But it might be cool to think about what sort of "search" one might do over, say, all possible SteemSQL queries, if there was a worthwhile and efficient way of evaluating them.

Congratulations @markgritter! You have completed the following achievement on Steemit and have been rewarded with new badge(s) :

Click on the badge to view your Board of Honor.

If you no longer want to receive notifications, reply to this comment with the word

STOP